The Gap Between Tutorial and Reality

Every Kubernetes tutorial follows the same script: create a deployment, expose a service, scale up, scale down. It all works perfectly in minikube. Then you deploy to production, and suddenly you're dealing with node failures, networking mysteries, and costs spiraling out of control.

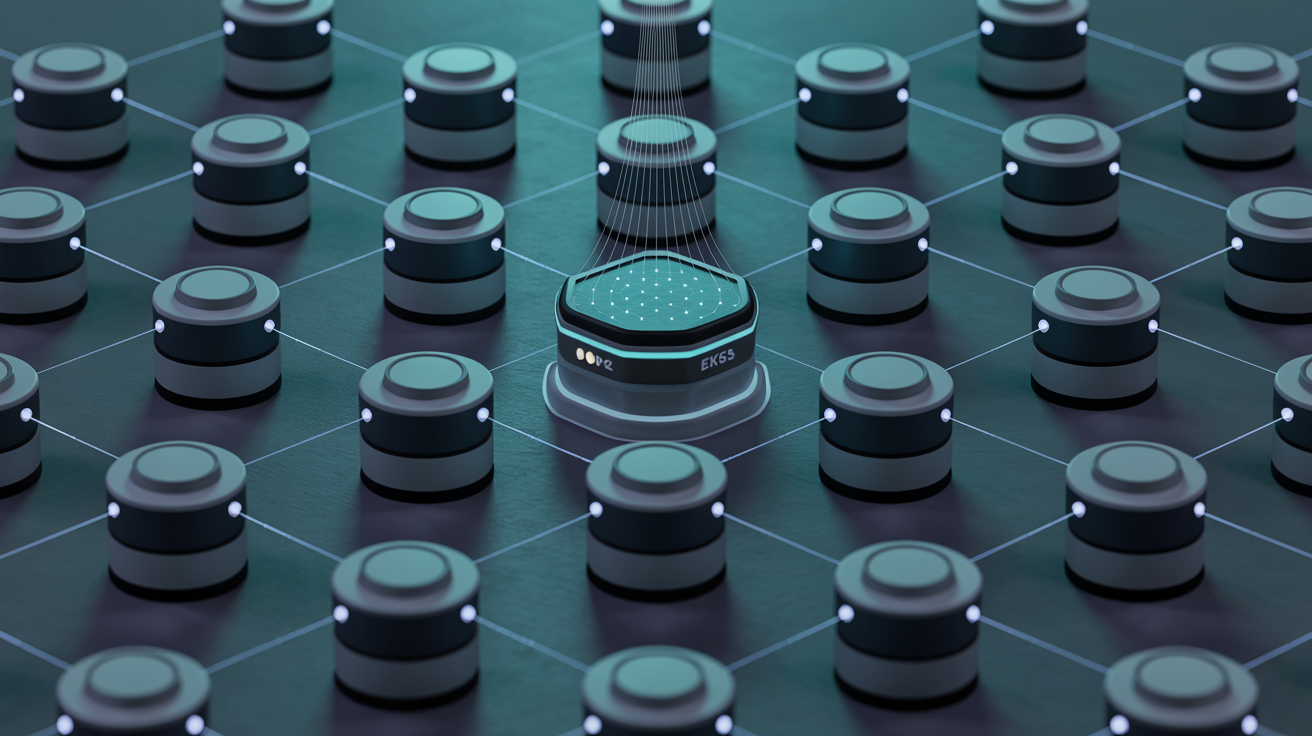

I've operated EKS clusters handling millions of requests across three organizations. Here's what actually matters in production.

Cluster Architecture Decisions That Haunt You

Your first decision—how many clusters to run—will affect everything downstream. The "one big cluster" approach seems simpler but creates blast radius problems. When that cluster has issues, everything goes down together. Multi-tenant security becomes a nightmare of RBAC policies and network policies that nobody fully understands.

My recommendation: one cluster per environment per team. Yes, it's more clusters to manage. But EKS handles the control plane, and the isolation benefits outweigh the overhead. A staging cluster issue never affects production. Team A's misconfiguration can't take down Team B.

The Node Group Strategy That Saves Money

Most teams run a single node group with one instance type. That leaves money on the table. Here's the pattern I use:

Base node group: On-Demand instances for critical workloads that can't tolerate interruption. Size this for your minimum steady-state load.

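Burst node group: Spot instances across multiple instance types and availability zones. Configure Cluster Autoscaler to scale this group for additional capacity. If you run Karpenter instead, the equivalent is a NodePool that allows Spot capacity, since Karpenter launches instances directly rather than scaling node groups.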

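The key insight is spreading Spot instances across instance families. If you only use m5.xlarge Spot, you're vulnerable to capacity shortages in that specific type. Offer m5, m5a, m6i, c5, and r5 all as alternatives, so that when AWS runs low on one, you get another. One caveat: Cluster Autoscaler assumes every node in a group has the same CPU and memory, so with it keep each group to similarly sized types (for example m5.xlarge, m5a.xlarge, and m6i.xlarge) and put c5 or r5 in their own groups. Karpenter picks instance types per pending pod and does not have this constraint.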
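
The base-plus-burst pattern can be sketched as an eksctl cluster config generated from Python. This is a minimal sketch, not a definitive setup: the cluster name, region, group names, labels, and sizes are all hypothetical, and it assumes you create managed node groups with eksctl. The burst group sticks to three same-sized general-purpose types so that it also behaves well under Cluster Autoscaler.

```python
import json

# Hypothetical names and region, chosen only for illustration.
CLUSTER_NAME = "prod-payments"
REGION = "us-east-1"


def node_groups(base_size: int, burst_max: int) -> list[dict]:
    """Return two eksctl managed node groups: On-Demand base and Spot burst."""
    base = {
        "name": "base-on-demand",
        "instanceTypes": ["m5.xlarge"],
        # Fixed size: this group covers the minimum steady-state load.
        "minSize": base_size,
        "desiredCapacity": base_size,
        "maxSize": base_size,
        "labels": {"capacity": "on-demand"},
    }
    burst = {
        "name": "burst-spot",
        "spot": True,
        # Several families at one size, so a shortage in one type is survivable.
        "instanceTypes": ["m5.xlarge", "m5a.xlarge", "m6i.xlarge"],
        "minSize": 0,
        "desiredCapacity": 0,
        "maxSize": burst_max,
        "labels": {"capacity": "spot"},
    }
    return [base, burst]


def cluster_config(base_size: int = 3, burst_max: int = 20) -> dict:
    """Assemble an eksctl ClusterConfig document as a plain dict."""
    return {
        "apiVersion": "eksctl.io/v1alpha5",
        "kind": "ClusterConfig",
        "metadata": {"name": CLUSTER_NAME, "region": REGION},
        "managedNodeGroups": node_groups(base_size, burst_max),
    }


if __name__ == "__main__":
    print(json.dumps(cluster_config(), indent=2))
```

The labels let critical workloads pin themselves to the On-Demand group with a node selector, while everything else is free to land on Spot. JSON output should be accepted wherever YAML is, but treat that as an assumption to verify against your eksctl version.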

Observability: The Non-Negotiable Foundation

Before deploying a single application, you need observability. Not after. Before. I've seen teams deploy to Kubernetes, have issues, and then frantically try to add monitoring while the incident is ongoing. Don't be that team.

Day zero requirements:

- Prometheus for metrics (or CloudWatch Container Insights if you want managed).
- Fluent Bit for logs to CloudWatch or your preferred destination.
- AWS X-Ray or Jaeger for distributed tracing.
- Alerting rules for node health, pod health, and resource utilization.

Configure these before anything else runs on the cluster.
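
As a starting point for the alerting rules, here is a hedged sketch that builds a Prometheus rule group covering node health, pod health, and resource utilization. It assumes kube-state-metrics and node_exporter are being scraped (that is where these metric names come from); the thresholds, durations, and group name are illustrative guesses, not recommendations from real incidents.

```python
import json


def alert(name: str, expr: str, hold: str, severity: str, summary: str) -> dict:
    """Build one Prometheus alerting rule."""
    return {
        "alert": name,
        "expr": expr,
        "for": hold,  # how long the condition must hold before firing
        "labels": {"severity": severity},
        "annotations": {"summary": summary},
    }


def day_zero_rules() -> dict:
    """Rule group for node health, pod health, and resource utilization."""
    rules = [
        # Node health: kube-state-metrics reports the Ready condition per node.
        alert(
            "NodeNotReady",
            'kube_node_status_condition{condition="Ready",status="true"} == 0',
            "5m",
            "critical",
            "Node {{ $labels.node }} has not been Ready for 5 minutes",
        ),
        # Pod health: repeated container restarts usually mean a crash loop.
        alert(
            "PodRestartingOften",
            "increase(kube_pod_container_status_restarts_total[15m]) > 3",
            "5m",
            "warning",
            "Container {{ $labels.container }} in {{ $labels.pod }} keeps restarting",
        ),
        # Resource utilization: node memory from node_exporter.
        alert(
            "NodeMemoryHigh",
            "(1 - node_memory_MemAvailable_bytes / node_memory_MemTotal_bytes) > 0.9",
            "10m",
            "warning",
            "Memory usage on {{ $labels.instance }} is above 90%",
        ),
    ]
    return {"groups": [{"name": "day-zero", "rules": rules}]}


if __name__ == "__main__":
    print(json.dumps(day_zero_rules(), indent=2))
```

Three rules is deliberately small. The point is to have something paging you from day zero, then tune thresholds once you have real traffic.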

The Security Posture Most Teams Skip

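Here's a sobering exercise: run kubectl auth can-i --list while impersonating a namespace's default service account (for example with --as=system:serviceaccount:default:default). A fresh cluster grants that account almost nothing, but in many long-lived clusters the list is far longer than it should be, because broad role bindings have accumulated over time. Cluster-wide read access is common, and write access is not unheard of.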
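
To repeat that check across namespaces, a small helper can build the impersonation command for you. This is a sketch: the function name is made up for illustration, and it only constructs the argument list, so actually running it assumes kubectl is installed and that your own user is allowed to impersonate service accounts.

```python
import shlex


def can_i_list_command(namespace: str, service_account: str = "default") -> list[str]:
    """Build a kubectl command that lists what a service account is allowed to do."""
    # Service accounts are addressed as system:serviceaccount:<namespace>:<name>.
    subject = f"system:serviceaccount:{namespace}:{service_account}"
    return [
        "kubectl", "auth", "can-i", "--list",
        f"--as={subject}",
        "--namespace", namespace,
    ]


if __name__ == "__main__":
    # Print the command rather than running it, so the sketch works without a cluster.
    print(shlex.join(can_i_list_command("default")))
```

Pass the returned list to subprocess.run if you want to execute it, and loop over your namespaces to see where permissions have crept in.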

Production security checklist:

- Disable automounting of service account tokens by default.
- Create a dedicated service account per application with minimal RBAC.
- Enforce the Pod Security Standards through Pod Security Admission, the built-in replacement for the removed PodSecurityPolicy.
- Enable network policies to restrict pod-to-pod communication, and confirm that your CNI actually enforces them.
- Use IRSA (IAM Roles for Service Accounts) instead of node-level IAM roles.
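
Several items on that checklist translate directly into manifests. The sketch below generates a per-application baseline: a namespace that enforces the restricted Pod Security Standard, a dedicated service account with token automounting off and an IRSA role annotation, and a default-deny network policy. The namespace, application name, and role ARN are placeholders you would replace, and the helper names are invented for this example.

```python
import json


def namespace(name: str) -> dict:
    """Namespace that enforces the 'restricted' Pod Security Standard."""
    return {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {
            "name": name,
            "labels": {
                "pod-security.kubernetes.io/enforce": "restricted",
                "pod-security.kubernetes.io/warn": "restricted",
            },
        },
    }


def service_account(app: str, ns: str, role_arn: str) -> dict:
    """Dedicated service account: no automounted API token, IAM role via IRSA."""
    return {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {
            "name": app,
            "namespace": ns,
            # IRSA: binds this service account to an IAM role.
            "annotations": {"eks.amazonaws.com/role-arn": role_arn},
        },
        # Pods that really need the Kubernetes API can opt back in per pod spec.
        "automountServiceAccountToken": False,
    }


def default_deny(ns: str) -> dict:
    """Deny all ingress and egress for every pod in the namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": ns},
        # An empty podSelector matches all pods. This also blocks DNS egress,
        # so pair it with explicit allow policies.
        "spec": {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]},
    }


def security_baseline(ns: str, app: str, role_arn: str) -> dict:
    """Bundle the three objects into a v1 List that can be applied in one go."""
    return {
        "apiVersion": "v1",
        "kind": "List",
        "items": [namespace(ns), service_account(app, ns, role_arn), default_deny(ns)],
    }


if __name__ == "__main__":
    # Placeholder ARN using an example account ID; substitute your own role.
    arn = "arn:aws:iam::111122223333:role/example-app-role"
    print(json.dumps(security_baseline("payments", "api", arn), indent=2))
```

RBAC is intentionally absent: the minimal grant for an application that never talks to the Kubernetes API is no Role at all. Add a narrowly scoped Role and RoleBinding only when a workload needs one.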

The Deployment Strategy That Prevents Outages

Default Kubernetes deployments use rolling updates, which work fine until they don't. The problem: Kubernetes considers a pod "ready" the moment its readiness probe passes, or as soon as its containers start if no probe is defined. If the probe only checks that the process is up, traffic arrives while your application is still warming caches, establishing connections, or loading data.
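
One way to narrow that gap is to point the readiness probe at an endpoint that reports success only after warm-up, and to make each new pod prove itself for a while before the rollout moves on. The fragment below is a sketch of those Deployment fields; the container name, port, /ready path, and timings are assumptions for illustration, and your application has to implement the endpoint.

```python
import json

APP_CONTAINER = "web"  # hypothetical container name
READY_PATH = "/ready"  # hypothetical endpoint that returns 200 only after warm-up
APP_PORT = 8080        # hypothetical port


def rollout_safety_fragment(min_ready_seconds: int = 30) -> dict:
    """Deployment fields for a readiness probe that reflects warm-up, plus a soak period."""
    return {
        "spec": {
            # A new pod must stay Ready this long before it counts as available.
            "minReadySeconds": min_ready_seconds,
            "strategy": {
                "type": "RollingUpdate",
                # Never dip below the desired replica count; add one pod at a time.
                "rollingUpdate": {"maxUnavailable": 0, "maxSurge": 1},
            },
            "template": {
                "spec": {
                    "containers": [
                        {
                            "name": APP_CONTAINER,
                            "readinessProbe": {
                                "httpGet": {"path": READY_PATH, "port": APP_PORT},
                                "periodSeconds": 5,
                                "failureThreshold": 3,
                            },
                        }
                    ]
                }
            },
        }
    }


if __name__ == "__main__":
    print(json.dumps(rollout_safety_fragment(), indent=2))
```

With maxUnavailable set to 0, a new pod that never becomes Ready stalls the rollout instead of replacing healthy capacity, which is usually the failure mode you want.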

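Add pre-stop hooks with sleep delays, so a terminating pod keeps serving until load balancers and Service endpoints have stopped sending it traffic. Configure PodDisruptionBudgets so node drains and upgrades can't evict too many replicas at once. Use Argo Rollouts for canary deployments with automatic rollback based on metrics. These aren't optional for production: they're the difference between smooth updates and customer-facing incidents.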
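
The first two of those are small enough to sketch. The code below builds a pod spec fragment with a pre-stop sleep and a matching PodDisruptionBudget. The app label, namespace, and durations are hypothetical, the helper names are invented for this example, and the exec-based hook assumes the container image ships a sleep binary.

```python
import json


def graceful_shutdown_fragment(container: str, sleep_seconds: int = 10,
                               grace_seconds: int = 40) -> dict:
    """Pod spec fragment: delay SIGTERM so in-flight routing changes can settle."""
    if grace_seconds <= sleep_seconds:
        # The grace period covers the pre-stop hook too, so the app would get
        # no time for its own shutdown after the sleep.
        raise ValueError("grace_seconds must be greater than sleep_seconds")
    return {
        "terminationGracePeriodSeconds": grace_seconds,
        "containers": [
            {
                "name": container,
                "lifecycle": {
                    # Runs before the container receives SIGTERM.
                    "preStop": {"exec": {"command": ["sleep", str(sleep_seconds)]}}
                },
            }
        ],
    }


def pod_disruption_budget(app: str, namespace: str, min_available: int) -> dict:
    """PodDisruptionBudget that keeps at least min_available replicas during drains."""
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": f"{app}-pdb", "namespace": namespace},
        "spec": {
            "minAvailable": min_available,
            "selector": {"matchLabels": {"app": app}},
        },
    }


if __name__ == "__main__":
    print(json.dumps(graceful_shutdown_fragment("web"), indent=2))
    print(json.dumps(pod_disruption_budget("web", "payments", 2), indent=2))
```

Keep minAvailable below your replica count. A budget that demands every replica stay up will block node drains entirely.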